- Written by Compudata

- Published: 12 Jul 2019

How would you feel if a video of you appeared online, showing you saying things you never actually said? You may never be targeted this way, but the scenario is very real, thanks to a relatively recent development in machine learning and artificial intelligence. These videos, known as “deepfakes,” have made it crucial to approach media with skepticism.

What is a Deepfake, and How Are They Made?

A deepfake is a fabricated video that makes it possible to put words in someone’s mouth. As multiple research teams have demonstrated, the technology is not yet perfect, but it can already produce a compelling video, or an even more convincing still image.

Deepfakes can be made using a combination of techniques and tools.

Video Deepfakes

Using specialized software, a video is scanned to identify the phonemes (the distinct sounds that make up spoken words) that the subject vocalizes. Once identified, the phonemes are matched with the facial expressions that produce those sounds, known as visemes. A 3D model of the subject’s face is then built from the original video.

With the right software, these three elements (phonemes, visemes, and the 3D face model) can be combined with a transcript to generate new footage and superimpose it over the original, making it appear that the person is saying something they never said. The result can be convincing, yet just different enough to be disconcerting.
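To make the transcript-to-mouth-shape step a little more concrete, here is a minimal Python sketch. It assumes a tiny, hypothetical pronunciation dictionary (ARPAbet-style phoneme symbols with stress marks dropped) and a simplified, illustrative viseme table; the names `PRONUNCIATIONS`, `PHONEME_TO_VISEME` and `transcript_to_visemes` are our own, and real systems use far larger mappings plus the 3D face model to render each shape. Notice that several sounds share one mouth shape ("p", "b" and "m" all close the lips), which is why there are fewer visemes than phonemes.

```python
# Hypothetical mini pronunciation dictionary (ARPAbet-style symbols, stress marks dropped).
PRONUNCIATIONS = {
    "bob": ["B", "AA", "B"],
    "me": ["M", "IY"],
    "five": ["F", "AY", "V"],
}

# Simplified, illustrative phoneme-to-viseme table: several phonemes share one mouth shape.
PHONEME_TO_VISEME = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "lip_on_teeth", "V": "lip_on_teeth",
    "AA": "open", "AY": "open",
    "IY": "spread",
}


def transcript_to_visemes(transcript: str) -> list[str]:
    """Turn a transcript into the sequence of mouth shapes a face model would need to show."""
    visemes = []
    for word in transcript.lower().split():
        # Raises KeyError for any word missing from the toy dictionary.
        for phoneme in PRONUNCIATIONS[word]:
            visemes.append(PHONEME_TO_VISEME[phoneme])
    return visemes


print(transcript_to_visemes("Bob me five"))
# ['lips_closed', 'open', 'lips_closed', 'lips_closed', 'spread', 'lip_on_teeth', 'open', 'lip_on_teeth']
```

Because "bob" and "mom" would produce identical lip shapes at the start and end, a forger only needs the visemes, not the exact sounds, to make the mouth movements look plausible.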

A similar method, which maps the expressions a person makes in source footage onto a second person’s face, can even be used to bring paintings and old photographs to life.

Still Image Deepfakes

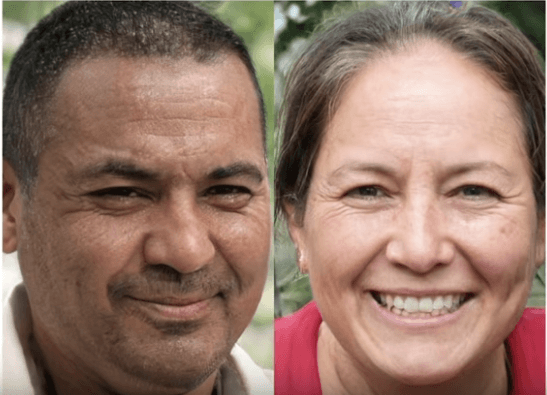

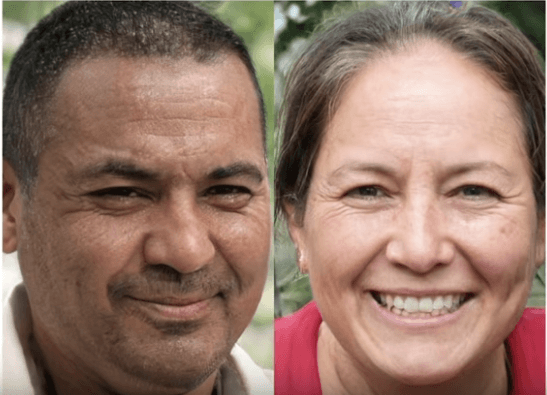

The AI-generated still images of people produced back in 2014 were low-quality and generally unconvincing, but those produced today, just five years later, are effectively indistinguishable from the real thing. This is thanks to a technique known as a generative adversarial network (GAN). In this approach, one AI generates images of people’s faces and learns from feedback on how convincing they were. Reaching the desired level of photorealism could take millions of rounds of feedback, far more than any human reviewer has time for.

Instead of subjecting a human being to critiquing millions of images, a second AI is trained to guess whether each picture was created by the first AI or is a genuine photograph. Neither is particularly effective at first, but both improve quickly as they compete, and the first AI can soon produce images that are effectively indistinguishable from actual photographs of real people.
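For readers curious about the mechanics, the back-and-forth between the two AIs can be illustrated with a deliberately tiny Python sketch. Instead of faces, the hypothetical "generator" here produces single numbers (`fake = a * z + b`) and tries to imitate real data drawn from a normal distribution centred on 4, while the "discriminator" is a one-variable logistic classifier that estimates whether a number is real. The function `train_toy_gan` and all of its parameters are our own illustration, not NVIDIA's code; real face generators use deep neural networks and vastly more training.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function (avoids overflow in math.exp)."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 3000, batch: int = 64, lr: float = 0.05,
                  real_mean: float = 4.0, seed: int = 0) -> tuple[float, float]:
    """Alternate discriminator and generator updates on 1-D toy data.

    Generator:     fake = a * z + b, with z ~ N(0, 1)
    Discriminator: D(x) = sigmoid(w * x + c), its estimate that x is real
    Real data:     x ~ N(real_mean, 1)
    Returns the learned generator parameters (a, b).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator starts far from the real data
    w, c = 0.0, 0.0  # discriminator starts by guessing 50/50

    for _ in range(steps):
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        zs = [rng.gauss(0.0, 1.0) for _ in range(batch)]

        # 1) Discriminator step: gradient descent on
        #    -log D(real) - log(1 - D(fake)), i.e. get better at telling them apart.
        gw = gc = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            gw -= (1.0 - d) * x
            gc -= 1.0 - d
        for z in zs:
            x = a * z + b
            d = sigmoid(w * x + c)
            gw += d * x
            gc += d
        w -= lr * gw / batch
        c -= lr * gc / batch

        # 2) Generator step: gradient descent on -log D(fake),
        #    i.e. nudge a and b so the updated discriminator is more likely to call fakes real.
        ga = gb = 0.0
        for z in zs:
            d = sigmoid(w * (a * z + b) + c)
            ga -= (1.0 - d) * w * z
            gb -= (1.0 - d) * w
        a -= lr * ga / batch
        b -= lr * gb / batch

    return a, b


a, b = train_toy_gan()
print(f"generator offset b = {b:.2f} (real data mean is 4.0)")
```

With these settings we would expect `b` to drift toward 4 as the generator learns to fool the discriminator. Because a single-variable logistic classifier can really only detect a difference in the average, this toy should not be expected to learn the real data's spread (`a`); that limitation is one reason real GANs use much more powerful networks on both sides.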

Neither of the people pictured here actually exists; both were generated by NVIDIA as part of its machine learning research.

As a result, people’s ability to spread falsehoods, and to make the Internet an increasingly misinformative place, has grown dramatically.

How Misinformative?

Let’s look at a relatively recent example of how impactful altered video can be. In May of this year, a video of House Speaker Nancy Pelosi went viral on social media, making it appear that she was giving a speech while intoxicated. To make her words sound slurred, the original footage had been slowed to 75 percent of its original speed, and her voice had been adjusted so it sounded closer to her natural pitch. This wasn’t the only instance, either. In addition to this speech, originally delivered at an event for the Center for American Progress, Pelosi’s voice was also manipulated to make her appear drunk as she spoke to the American Road & Transportation Builders Association earlier in May. A similar claim was made in yet another video posted last year.
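The arithmetic behind a slowdown like this can be sketched in a few lines of Python. This assumes a simple playback-speed change (the exact tools used on the real clip are not known here), and the 60-second clip length is a made-up example; the helper names are hypothetical. Slowing audio this way lowers every frequency by the same factor, which is why a voice would then need its pitch shifted back up to sound natural.

```python
import math


def slowed_duration(seconds: float, speed: float) -> float:
    """Length of a clip after playback speed is scaled by `speed` (0.75 means 75%)."""
    return seconds / speed


def pitch_shift_semitones(speed: float) -> float:
    """Pitch change, in semitones, when audio is simply played back at `speed`.

    A naive slowdown multiplies every frequency by `speed`; an octave (a factor
    of 2) is 12 semitones, so the shift is 12 * log2(speed).
    """
    return 12 * math.log2(speed)


print(slowed_duration(60, 0.75))              # 80.0
print(round(pitch_shift_semitones(0.75), 2))  # -4.98
```

In other words, a one-minute clip stretched to 80 seconds would also drop the voice by roughly five semitones, a change listeners would likely notice unless the pitch were corrected.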

Regardless of your political views, there is no denying that this kind of activity is dangerous. While deepfakes have not yet been deployed as part of a disinformation campaign, Director of National Intelligence Dan Coats has warned Congress that America’s rivals could use them in the future.

For a sense of what is already possible, consider a demonstration given in a TED Talk by Google engineer Supasorn Suwajanakorn. Using footage of President George W. Bush, Suwajanakorn was able to create maps of different public figures and celebrities and have them speak on his behalf. He also developed four different models of President Barack Obama, all delivering the same speech.

Suwajanakorn is now working on a browser plug-in to help identify deepfake videos and stop the spread of disinformation.

How Could This Impact Your Business?

Global politics and pop culture matter to many people, but how much of a threat could deepfakes really pose to your business? Unfortunately, it may not be long before they could have a significant impact on you.

Let’s say a customer or client left your services feeling disgruntled, for whatever reason. Before long, deepfake technology could be accessible enough for them to use it in a smear campaign against you and your company. Consider Speaker Pelosi’s ongoing struggle with people using crudely altered videos to discredit her. Would you want similar tactics used to misquote or misrepresent you once this technology becomes widespread?

Probably not.

In the meantime, there are plenty of other threats that could damage your business and its reputation just as much as fabricated video footage could. Compudata can help protect your operations from these threats. Give us a call at 1-855-405-8889 to learn more about what we have to offer.

Comments Off on Deepfakes Make It Hard to Believe Your Eyes or Ears

Posted in Blog, Miscellaneous

Tagged Communication, Miscellaneous, Users